Hi @magnetseven,

Let me check that I understand your question. Given a network of 30,000 peers, are you asking for a rough estimate of throughput? If so, which of these do you mean: how many messages have to be passed to ensure that all peers see a given message, how many messages per second each peer can process or forward, or how many messages per second the entire network can process?

The rest of my answer assumes you’re asking about estimating how many messages per second an entire network can process.

Lots of different attributes change the answers to all of the above questions, and all of the answers are tied to the “connectedness” of the network. Gossip networks typically aren’t “fully connected”, which would mean that every peer is connected to every other peer. There is almost always an overlay network of some sort. Ethereum uses what they call “plumtree” overlays, which use a heuristic to spontaneously organize a p2p network into a minimum spanning tree.

Minimum spanning trees connect all peers with the minimum number of links. However, in very large networks, some of those links become “trunks” that connect very large subgraphs of peers. Those trunks pass many more messages than links between peers closer to the “edge” of the graph. In fact, the volume of traffic on the links in a minimum spanning tree typically follows a Pareto distribution: a few links carry much more traffic than all the others, and the traffic carried by the remaining links forms a power-law “long tail”.

That said, if a “trunk” carries too much traffic, the “cost” of that link goes up and the peers will look for other links to the other side of the graph. If a better link is found, messages will preferentially flow through it and the structure of the minimum spanning tree changes.

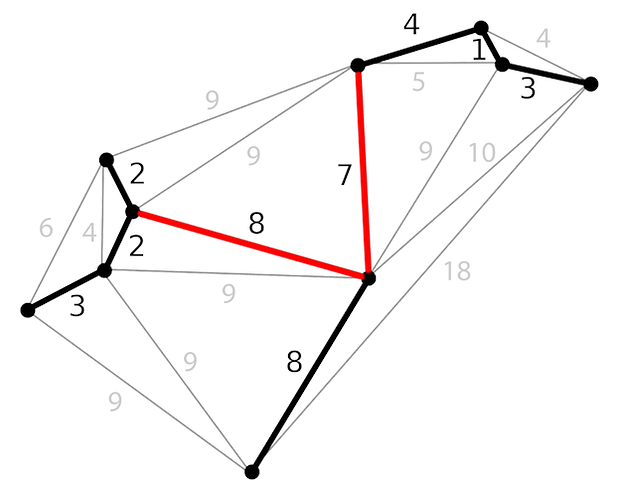

My point in saying this is that the “throughput”, in terms of messages per second for the entire p2p network, is bounded by the throughput of the trunk links in a plumtree-built minimum spanning tree. So you can estimate your message size and, given your simulated network, estimate the number of messages per second that can pass through the “trunk” links to get the maximum for the whole network. For instance, if you look at the graph from the plumtree page, the links I’ve highlighted in red are going to be your “trunks” and their maximum throughput will limit the overall throughput of the whole overlay network.
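To make that estimate concrete, here is a minimal back-of-the-envelope sketch. The function name, the `overhead_bytes` parameter and the example numbers are all hypothetical, and it assumes every message has to cross the trunk exactly once and that nothing else shares the link.

```python
def max_messages_per_second(link_bandwidth_bps, message_size_bytes, overhead_bytes=0):
    """Upper bound on messages per second through one trunk link.

    Assumptions (not measurements): the link is used only for these
    messages, each message crosses it once, and any per-message
    protocol overhead is captured by overhead_bytes.
    """
    bits_per_message = 8 * (message_size_bytes + overhead_bytes)
    return link_bandwidth_bps / bits_per_message


# Hypothetical example: a 100 Mbit/s trunk carrying 1 KiB messages.
estimate = max_messages_per_second(100_000_000, 1024)
print(f"~{estimate:.0f} messages/sec through the trunk")
```

Whatever number comes out for your slowest trunk is a ceiling for the whole overlay; real traffic will land below it once retransmissions and control messages are added.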

If you do run a simulation, the way you can find the “trunk” links is to find the peers with the lowest average number of hops between themselves and the other peers in the network. Those peers are in the “middle” of the minimum spanning tree and the links between them and their immediate peers are likely the trunks. You can sort the links by the number of peers that are reachable through each link to find the links that carry the most traffic.
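The sorting step can be sketched roughly like this. It assumes your simulation hands you the spanning tree as a plain list of `(peer, peer)` links; `rank_trunk_links` and the peer names are made up for illustration. For each link it counts the peers on both sides and scores the link by the smaller side, so links that split the network most evenly come first.

```python
from collections import defaultdict, deque


def rank_trunk_links(tree_links):
    """Rank the links of a spanning tree, most trunk-like first.

    tree_links: list of (peer_a, peer_b) pairs forming a tree.
    Returns a list of ((parent, child), score) where score is the
    number of peers on the smaller side of that link.
    """
    neighbours = defaultdict(list)
    for a, b in tree_links:
        neighbours[a].append(b)
        neighbours[b].append(a)
    total_peers = len(neighbours)

    # Breadth-first walk from an arbitrary root to get parent pointers.
    root = next(iter(neighbours))
    parent = {root: None}
    visit_order = []
    queue = deque([root])
    while queue:
        peer = queue.popleft()
        visit_order.append(peer)
        for other in neighbours[peer]:
            if other not in parent:
                parent[other] = peer
                queue.append(other)

    # Subtree sizes: walk the visit order backwards so children are
    # added into their parents before the parents are used.
    subtree_size = {peer: 1 for peer in visit_order}
    for peer in reversed(visit_order):
        if parent[peer] is not None:
            subtree_size[parent[peer]] += subtree_size[peer]

    ranked = []
    for peer in visit_order:
        if parent[peer] is not None:
            below = subtree_size[peer]
            score = min(below, total_peers - below)
            ranked.append(((parent[peer], peer), score))
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


# Hypothetical tree: two small clusters joined by a single link.
links = [("hub1", "hub2"),
         ("hub1", "p1"), ("hub1", "p2"), ("hub1", "p3"),
         ("hub2", "p4"), ("hub2", "p5"), ("hub2", "p6")]
for link, score in rank_trunk_links(links):
    print(link, score)
```

In this toy tree the `hub1`/`hub2` link has four peers on each side and ranks first, while every leaf link scores 1. Scoring by the smaller side is just one reasonable choice; you could also multiply the two side sizes to count the peer pairs whose path crosses the link.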

I’m not aware of any simulation tool designed to test gossipsub overlay networks specifically. I know there are people using tools like Shadow to do large-scale network tests, but I’m unaware of one specifically testing gossipsub. You could build a fairly simple simulation into which you plug numbers for average message size, link throughputs, number of peers and the initial set of links. Then add in the plumtree heuristic for adjusting links so that it self-organizes into a minimum spanning tree, and measure what the throughputs are. Obviously this would be fully synthetic and not really a test grounded in reality, because it’s missing the noise of internet “weather” (e.g. packet loss, retransmission, etc.), but it could be useful for doing rough estimates on networks with a very large number of peers.

I did find this interesting, fairly recent paper on the resiliency of gossipsub networks. That’s not exactly what you’re asking about, but maybe there’s some related information in there.

If you provide more information on what exactly you’re asking, I’m happy to dive into it with you.

Cheers!